MTU develops diesel engines that are deployed in civil nuclear power plants as drivers for emergency generators to generate electrical power. Such engines are available to the market diversely as either common-rail or fuel-rack controlled engines with capabilities to produce up to 7 MW electrical power per unit. In case of failures in the electrical grid of a nuclear power plant one or more of these units are requested to provide power to support the capability to control the nuclear plant core and cooling systems. It is obvious that the functional contribution may be mission-critical to the overall plant.

The engines are controlled by an MTU-developed digital engine control unit (ECU). This ECU performs only safety functions and in particular maintains the safe state requested by the plant operator. This safe state ensures that the engine stands still if required and is controlled to maintain the demanded engine speed if required.

Software decomposition

The software of the ECU runs on top of a runtime environment handwritten in assembler, specific to the controller in use. The C application is partly handwritten, party generated from SCADE models.

The handwritten C code implements a scheduler, a hardware abstraction layer, and self-supervision capabilities. The hardware abstraction layer polls physical sensor inputs, controls hardware actuators, and provides hardware-related self-supervision mechanisms which must not interfere with the two former objectives in fixed timing intervals. Such fixed intervals must be small enough to acquire all relevant events and to maintain sensor-acquisitioning sampling theorems.

The scheduler provides safe data and control flow interfacing between the concurrent hardware access thread and the main control loop. Such interfacing limits the amount of required race condition considerations and allows for maintaining safe timing constraints of the threads. Based on safe overapproximations of timing envelopes it is possible to prove that all scheduling constraints are always maintained.

The SCADE model provides the engine controller algorithms. The monolithic model strictly follows the synchronous paradigm by separating input acquisition, processing and generating output. The entire model execution is provided in SCADE which is a prerequisite to make further statements on the model integrity.

C was chosen as programming language because of the abundant availability of translators for the targeted PowerPC architecture. Code generators from model driven approaches to C are well introduced and the SCADE generator is validated to translate correctly to a defined language subset.

Development constraints

MTU’s software and development process comply with the international standards IEC 60880 and IEC 61508:2010, part 3 (SCL3 for software).

All C code is produced in a subset of ISO/IEC9899:1999 that is sufficient for all of the outlined application requirements. This version of the standard is so widely used that its deficiencies are well understood and compilers are more likely to fully comply. Emphasis is put on the objective to enhance robustness, to provide exactly one method to solve a problem and to avoid potentially error-prone constructs. The MISRA-C:2004 standard is a good starting point for choosing such a language subset. In addition, continuous research on actual and potential coding defects is taken into account. Lastly, the subset is formed by complementing cultural development among users and testers of the application in question in a structured process.

With each programming project, an assessment of perceived risks regarding frequency, potential consequences and chances of detection is carried out. All risk considerations are condensed into a set of in-house coding guidelines also reflecting the current project team’s language proficiency.

Data types are basically restricted to the use of integer arithmetics with as few type conversions as possible. Thus compiler behavior is as explicit as possible, mending some of the inherent type unsafety of C. Enums, unions and bit fields are not part of the language subset.

The language subset is also designed to be well covered by automatic checking tools such as AbsInt’s RuleChecker.

The past: Using a non-verified compiler

Compiling source code which becomes part of safety software in production use is an inherently critical task. MTU only uses critical tools in safety applications if such a tool has been developed within a structured process. It must provide sufficient evidence for reliable operation and user experience must have a positive record.

Historically MTU has used a traditional commercially available C compiler well proven in use. Use of this compiler requires some maintenance effort due to the sporadic appearance of new bugs. Each of these bugs requires evaluation and eventually code changes and changes of code review checklists for fully standard-compliant source code.

When such a proven-in-use compiler is removed from standard supplier support, there are two options:

- The supplier may offer the service to check if bugs in later compiler versions already existed in the used version. However, the supplier may charge substantial fees for such service.

- Alternatively the user may decide to qualify a newer compiler version with a commercial validation suite. This also induces substantial effort and external costs.

Neither of these alternatives is satisfactory.

The switch to CompCert

CompCert is the only formally verified optimizing C compiler. It is structured as a pipeline of 20 compilation passes that bridge the gap between C source files and object code, going through 11 intermediate languages. The frontend and backend compilation passes are all formally proved to be free of miscompilation errors, and as a consequence, so is their composition.

The proofs are very large, owing to the many passes and the many cases to be considered — too large to be carried using pencil and paper. Machine assistance is used in the form of the Coq proof assistant. Coq enables unambiguous specifications, proofs in interaction with the tool, and automatic re-checks of proofs for soundness and completeness. This achieves very high levels of confidence in the proof.

At 100,000 lines of Coq and six person-years of effort, CompCert’s proof is among the largest ever performed with a proof assistant.

“The striking thing about our CompCert results is that the middle-end bugs we found in all other compilers are absent. [...] CompCert is the only compiler we have tested for which Csmith cannot find wrong-code errors. This is not for lack of trying: we have devoted about six CPU-years to the task.”

2011 study by Regehr, Yang et al.

Integration

The ECU control software uses a limited set of timing interrupts which does not impair worst-case execution time estimations. The traditional compiler accepts pragma indications to flag C functions so they can be called immediately from an interrupt vector. The compiler then adds code for saving the system state and more registers than used in a standard PowerPC EABI function call.

CompCert does not accept this compiler-dependent pragma nor inline assembly, so MTU hand-coded this mechanism in separate assembly files. Such assembler code can be placed in the runtime environment module. Some system-state recovery contained in a fallback exception handler is also transferred to the runtime environment.

The strategy of using a minimum sufficient subset of C is fully confirmed, as only one related change to the source code was necessary.

Runtime error analysis

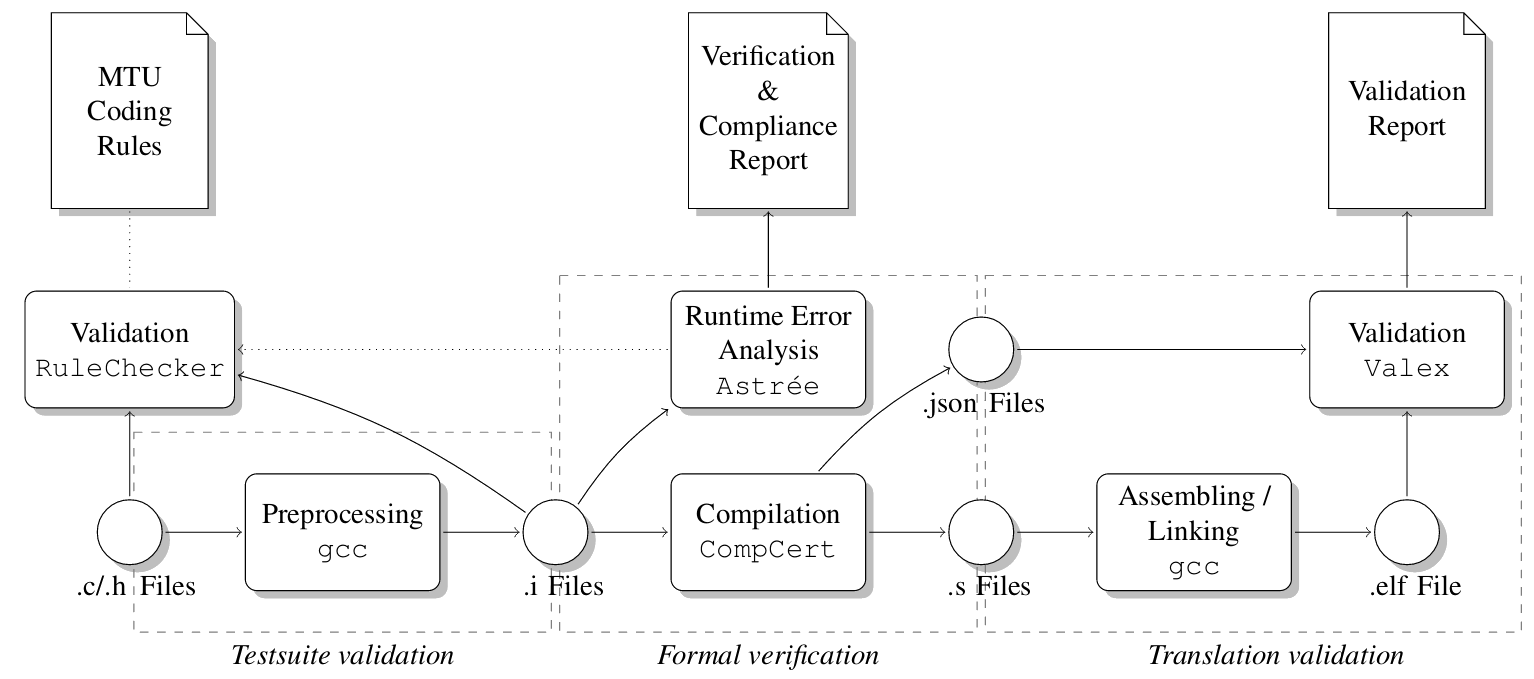

Behaviors undefined according to the C semantics are not covered by the formal correctness proof of CompCert. Only code that exhibits no numeric overflows, division by zero, invalid memory accesses or any other undefined behavior can possibly be functionally correct. Thus, MTU uses AbsInt’s sound static runtime-error analyzer Astrée to prove the absence of runtime errors including any undefined behaviors, and to complement the formal correctness argument of CompCert.

Checking coding guidelines

Astrée also provides the RuleChecker module for checking compliance to MISRA 2004 and 2012, ISO/IEC TS 17961, SEI CERT, CWE, computing code metrics and checking code metric thresholds. For MTU’s very own rule set, RuleChecker provides a coverage of more than 85%. The remaining 15% are objectives inevitably requiring human involvement, such as choosing understandable identifier names and providing helpful comments.

Translation validation

The verified part of the compilation tool chain ends at the generated assembly code. In order to check the actual ELF executable after the assembling/linking stage, MTU uses the Valex tool included with CompCert for automatic translation validation. The main goal is to verify that every function defined in a C source file compiled by CompCert and not optimized away by it can be found in the linked executable and that its disassembled machine instructions match the abstract assembly code. Additionally, Valex checks whether symbols are used consistently, whether variable size and initialization data correspond and whether variables are placed in the right sections in the executable.

The current MTU qualification setup using CompCert, Valex, Astrée, and RuleChecker.

Further minor modifications were necessary to adapt the build process to the CompCert compiler options. Also the linker control file required some changes since CompCert allocates memory segments differently from some traditional popular compilers. In the final step, an MTU-specific flashing tool assigns code, constant data as well as initialized and non-initialized data as required by the C runtime environment specific to the target architecture.

Code performance

The code generated by CompCert was subjected to the Valex tool and showed no indications of incompliance. The code was then integrated into the target hardware and extensively tested in a simulated synthetic environment, which is a precondition to using the integrated system on a real engine. If simulator test and engine test are passed they jointly provide behavioral validation coverage of every aspect of the functional system requirements.

All building processes were completed successfully. All functional tests passed.

To further assess the performance of CompCert, MTU investigated the size and the worst-case execution time of the generated code.

Memory consumption

The generated binary file was analyzed to determine the memory consumption by code and data segments.

- Compared to the conventional compiler, the code segment in the executable generated by CompCert is slightly smaller.

- The size of the data segment is almost identical in both cases.

These observations were consistent with the expectations since MTU used more aggressive optimization settings after switching to CompCert. The traditional compiler was configured not to use any optimization to ensure traceability and to reduce functional risks introduced by the compiler itself during the optimization stage. Thanks to CompCert, this restriction could be lifted.

CompCert performs register allocation to access data from registers and minimizes memory accesses. In addition, as opposed to the traditional compiler it accesses memory using small data areas. That mechanism lets two registers constantly reference base memory addresses so that address references require two PowerPC assembler instructions rather than three as before.

Worst-case execution time

The maximum execution time for one computational cycle is assessed with the static WCET analysis tool aiT that delivers safe upper execution time bounds. All concurrent threads are mapped into one computation cycle under worst-case conditions. The precise mapping definition is part of the architectural software design on the bare processor.

Analyses are performed on a normal COTS PC. Each entry (synchronous function, interrupt) is analyzed separately. Analysis of timing interrupt is split in several modes, and finally, the WCRT (worst-case response time) for one computational cycle is calculated.

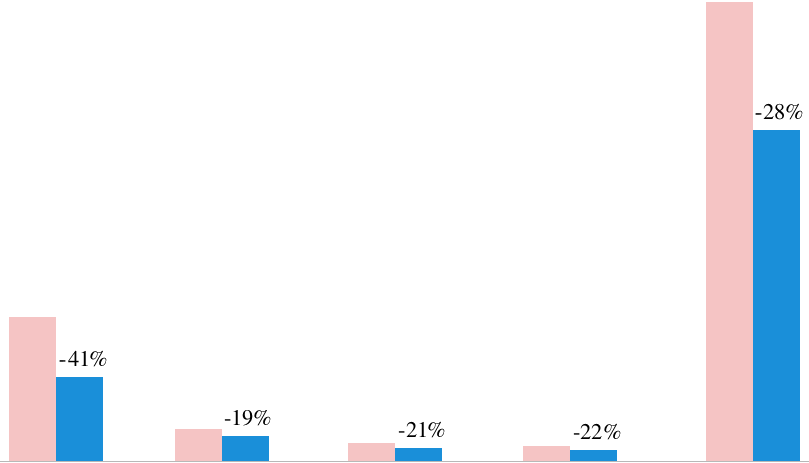

Worst-case execution times of various tasks compiled with

■ MTU’s conventional compiler and

■ CompCert

The computed WCET bounds lead to a total processor load which is about 28% smaller with the CompCert-generated code than with the code generated by the conventional compiler. The main reason for this behaviour is the improved memory performance. This result was consistent with MTU’s expectations and with previously published CompCert research papers.

Worst-case stack usage

MTU also determined a safe upper bound of the total stack usage in both scenarios, using AbsInt’s StackAnalyzer. The overall stack usage was found to be around 40% smaller with the CompCert-generated code than with the code generated by the conventional compiler.

The verdict

In November 2017, CompCert was successfully qualified by MTU Friedrichshafen according to IEC 60880, category A and IEC 61508-3:2010, SCL 3

for a certification project in the nuclear energy domain. The use of CompCert reduced

development time and costs.

“The executable code CompCert produces is proved to behave exactly as specified by the semantics of the source C program. [...] The main benefits are higher confidence in the correctness of the generated code, and significantly improved system performance.”

Further information

- Download the full paper, CompCert: Practical Experience on Integrating and Qualifying a Formally Verified Optimizing Compiler (PDF, 600kB), by D. Kästner, J. Barrho, U. Wünsche, M. Schlickling, B. Schommer et al., in ERTS² 2018 — Embedded Real Time Software and Systems, January 2018, Toulouse, France. <hal-01643290>.

- mtu-online.com

- info@absint.com

In the press

“The perfect compiler”, Elektronik automotive, September 2018, page 12 (PDF).