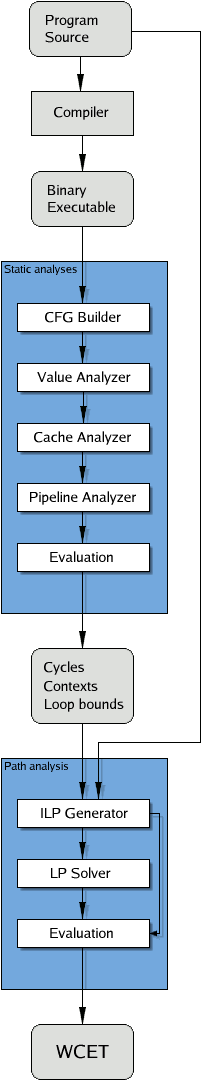

The WCET determination is composed of several different tasks.

- Reconstruction of the control flow from binary executables.

- Value analysis: computation of address ranges for instructions accessing memory.

- Cache analysis: classification of memory references as cache misses or hits.

- Pipeline analysis: predicting the behavior of the program on the processor pipeline.

- Path analysis: determination of the worst-case execution path of the program.

- Analysis of loops and recursive procedures.

Many of these tasks are quite complex for modern microprocessors and DSPs.

The arrangement of the tasks is described in the simplified picture to the right. The results of the value analysis are used by the cache analysis to predict the behavior of the (data) cache. The results of the cache analysis are fed into the pipeline analysis allowing the prediction of pipeline stalls due to cache misses. The combined results of the cache and pipeline analyses are used to compute the execution time of program paths.

The separation of the WCET determination into several phases has the additional effect that different methods tailored to subtasks can be used. In our case, the value analysis, the cache analysis, and the pipeline analysis are done by abstract interpretation, a semantics-based formal method for safe and sound static program analysis. Path analysis is done by integer linear programming.

Reconstruction of the control flow from binary programs

The starting point of our analysis framework is a binary program and optional additional user-provided information about numbers of loop iterations, upper bounds for recursion, etc.

In the first step a parser reads the compiler output and reconstructs the control flow. This requires some knowledge about the underlying hardware, e.g., which instructions represent branches or calls. The reconstructed control flow is annotated with the information needed by subsequent analyses and then translated into CRL (Control Flow Representation Language), a human-readable intermediate format designed to simplify analyses and optimizations at the executable/assembly level. This annotated control flow graph serves as the input for microarchitecture analyses.

Value analysis

The value analysis determines ranges for values in registers and by this it can resolve indirect accesses to memory. aiT offers an add-on that actually lets you inspect the contents of all registers and memory cells at any program point in any execution context.

Cache analysis

The cache analysis classifies the accesses to main memory, i.e. whether or not the needed data resides in the cache. The categorization of memory references and memory blocks is described in the table below.

| always hit | The memory reference always results in a cache hit. |

| always miss | The memory reference always results in a cache miss. |

| persistent | The referenced memory block is loaded at most once. |

| unreachable | The code cannot be reached. |

| not classified | The memory reference couldn’t be classified as any of the above. |

For certain target processors, cache-related preemption costs can be taken into account by the so-called Useful–Cache-Block (UCB) analysis. The number of useful cache blocks at a particular instruction then serves as an upper bound for the number of additional cache misses caused by an interrupt/preemption at that instruction.

Pipeline analysis

The

pipeline analysis models the pipeline behavior to determine execution times

for a sequential flow (basic block) of instructions. It takes

into account the current pipeline state(s), in particular the resource occupancies,

the contents of prefetch queues, the grouping of instructions, and the

classification of memory references as cache hits or misses. The result

is an execution time for each instruction in each distinguished

execution context.

The

pipeline analysis models the pipeline behavior to determine execution times

for a sequential flow (basic block) of instructions. It takes

into account the current pipeline state(s), in particular the resource occupancies,

the contents of prefetch queues, the grouping of instructions, and the

classification of memory references as cache hits or misses. The result

is an execution time for each instruction in each distinguished

execution context.

Path analysis

Following from the results of the microarchitecture analyses, the path analysis determines a safe estimate of the WCET. The program’s control flow is modeled by an integer linear program, so that the solution to the objective function is the predicted worst-case execution time for the input program. A special mapping of variable names to basic blocks in the integer linear program provides for a computation of execution and traversal counts for every basic block edge.

Analysis of loops and recursive procedures

Loops and recursive procedures are of special interest, since programs spend most of their runtime there. Treating them naïvely when analyzing programs for their cache and pipeline behavior will result in a high loss of precision.

Frequently, the following observation can be made: the first execution of the loop body usually loads the cache, and subsequent executions find most of their referenced memory blocks in the cache. Hence, the first iteration of the loop often encounters cache contents quite different from those of later iterations. This has to be taken into account when analyzing the behavior of a loop in the cache. A naïve analysis would combine the abstract cache states from the entry to the loop and from the return from the loop body, thereby losing most of the contents. Therefore, it is useful to distinguish the first iteration of a loop from the others.

A method has been designed and implemented which virtually unrolls loops once, the so-called VIVU (virtual inlining, virtual unrolling) approach. Memory references are now considered in different execution contexts, essentially nestings of first and non-first iterations of loops.